Determine your vSphere storage needs – Part 2: Performance

Besides capacity, performance is probably one of the most important design factors. For storage performance, two metrics matter most:

- IOPS

- Latency

The first step is to determine your performance needs in IOPS. This is probably the hardest part. To determine your IOPS needs, you have to know which types of virtual machines or applications you're going to run on your storage solution, and their corresponding I/O characteristics.

You can take two approaches to determine this:

- Theoretical

- Practical

In the theoretical approach, you categorize the types of virtual machines you're going to host on your storage solution. For example:

- Database

- Windows

- Linux

- Etc.

Then, for each type, you determine the corresponding block size, the read/write ratio, and whether the I/O is sequential or random.

In the practical, or real-life, method, you use a tool to determine the type of IO issued by your current virtual machines. There are a couple of tools available for this. One of them is PernixData Architect, which gives in-depth information about your storage IO workload, including the read/write ratio and the block sizes used.

Of course, you can use vCenter to see your current read/write ratio, but not the block size in use. You can even use ESXTOP in combination with perfmon to determine your IO workloads. This last option isn't the easiest, but it's an option if you don't have vCenter or the budget for Architect.
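vCenter and ESXTOP do report throughput and IOPS per direction, so you can at least estimate the *average* IO size by dividing one by the other. Below is a minimal sketch, assuming you have already read the read/write rates (KB/s) and read/write IOPS off one of those tools; the function names and sample numbers are hypothetical. Keep in mind this only yields an average, not the block size distribution that a tool like Architect shows.

```python
def average_io_size_kb(rate_kb_per_s: float, iops: float) -> float:
    """Average IO size in KB: throughput divided by the number of IOs."""
    if iops <= 0:
        return 0.0
    return rate_kb_per_s / iops


def read_write_profile(read_kbps: float, write_kbps: float,
                       read_iops: float, write_iops: float):
    """Return (read %, write %, avg read KB, avg write KB) for one sample."""
    total_iops = read_iops + write_iops
    read_pct = 100.0 * read_iops / total_iops if total_iops else 0.0
    write_pct = 100.0 - read_pct if total_iops else 0.0
    return (
        read_pct,
        write_pct,
        average_io_size_kb(read_kbps, read_iops),
        average_io_size_kb(write_kbps, write_iops),
    )


# Hypothetical sample: 300 read IOPS at 1200 KB/s, 200 write IOPS at 12800 KB/s.
print(read_write_profile(1200, 12800, 300, 200))
# (60.0, 40.0, 4.0, 64.0)
```

An average of 4 KB could still hide a mix of very small and very large IOs, which is why a per-IO view is more useful when you can get it.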

With this information, you can make a table like this:

| Type | # VMs | Block size (KB) | Avg. IOPS | % Read | % Write | Sequential | Note |
|---|---|---|---|---|---|---|---|
| Windows | 2000 | 4 | 50 | 60 | 40 | No | Basic application servers |
| Linux | 1000 | 4 | 50 | 60 | 40 | No | Basic application servers |
| MS SQL | 4 | 64 | 1000 | 65 | 35 | No | 2 for production, 2 for DevOps |

Note: These values are only an example. Your environment can be (and probably will be) different.
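To turn such a table into totals you can discuss with a vendor, here is a minimal sketch that sums the example rows. It assumes the Avg. IOPS column is per VM and that % Write is simply 100 minus % Read; the `Workload` class and `totals` function are hypothetical helpers, and the numbers are the example values above, not measurements.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    vms: int
    block_kb: int
    iops_per_vm: int  # assumption: the "Avg. IOPS" column is per VM
    read_pct: int     # % Write is taken as 100 - read_pct


def totals(workloads):
    """Sum front-end IOPS, read/write IOPS and throughput over all workload types."""
    total = reads = writes = kb_per_s = 0
    for w in workloads:
        iops = w.vms * w.iops_per_vm
        r = iops * w.read_pct // 100  # integer math keeps the result exact
        total += iops
        reads += r
        writes += iops - r
        kb_per_s += iops * w.block_kb  # throughput = IOPS x block size
    return {"iops": total, "read_iops": reads, "write_iops": writes, "kb_per_s": kb_per_s}


example = [
    Workload("Windows", 2000, 4, 50, 60),
    Workload("Linux", 1000, 4, 50, 60),
    Workload("MS SQL", 4, 64, 1000, 65),
]
print(totals(example))
# {'iops': 154000, 'read_iops': 92600, 'write_iops': 61400, 'kb_per_s': 856000}
```

Note how the four SQL servers contribute only 4,000 IOPS but about 30% of the throughput, because of their larger block size.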

So, why do you want to determine the read/write ratio and block size?

Every storage solution has its own way of writing data to the disks. Performance depends on the block size, the read/write ratio, and the RAID level (if any) used. A Windows virtual machine can use a block size of 64 KB. When a storage solution writes data in 4 KB blocks, one IO from this virtual machine results in 16 IOs on the storage. If your storage solution uses RAID 6, you have a write penalty of 6. So in this example, when a Windows guest issues one 64 KB write IO, this results in 96 (16 × 6) IOs on your storage. Hmm, makes you think, doesn't it?
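The arithmetic in this example can be written as a small function. This is a minimal sketch of the simplified model used above: split the guest IO into storage-sized blocks, then multiply writes by the RAID write penalty. The function name and penalty table are illustrative (the penalties are the commonly cited values), and real arrays with write caching, full-stripe writes, or variable block sizes will behave differently.

```python
import math

# Commonly cited RAID write penalties (back-end IOs per front-end write).
RAID_WRITE_PENALTY = {"RAID0": 1, "RAID1": 2, "RAID10": 2, "RAID5": 4, "RAID6": 6}


def backend_ios(io_kb: int, storage_block_kb: int, is_write: bool, raid_level: str) -> int:
    """Back-end IOs for one guest IO under the simplified model:
    the IO is split into storage-sized blocks, and each written block
    costs the RAID write penalty. Reads carry no RAID penalty."""
    blocks = math.ceil(io_kb / storage_block_kb)
    penalty = RAID_WRITE_PENALTY[raid_level] if is_write else 1
    return blocks * penalty


print(backend_ios(64, 4, True, "RAID6"))   # 96, the example above
print(backend_ios(64, 4, False, "RAID6"))  # 16: a read is split but not penalized
```

The read/write ratio matters because only the write share is multiplied by the penalty.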

Nowadays, every storage system has some type of cache on board. This makes it hard to determine how many IOPS a storage system can deliver. But remember: a write IO can be handled by the cache, but a read IO that is not already in cache needs to be read from disk first.
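To see why cache makes IOPS figures hard to compare, here is a minimal sketch of the read side only: reads that miss the cache still have to come from disk. The hit ratio is a hypothetical input you would have to estimate or measure yourself. Writes absorbed by a write-back cache still have to be destaged to disk later, which this sketch ignores.

```python
def disk_read_iops(read_iops: float, read_cache_hit_ratio: float) -> float:
    """Reads that miss the cache must be served from disk."""
    if not 0.0 <= read_cache_hit_ratio <= 1.0:
        raise ValueError("hit ratio must be between 0 and 1")
    return read_iops * (1.0 - read_cache_hit_ratio)


# Hypothetical: 90,000 read IOPS with an assumed 80% read cache hit ratio.
print(round(disk_read_iops(90_000, 0.8)))  # 18000
```

The same front-end workload can therefore put very different loads on the disks, depending on how well it fits in cache.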

Second, you have to determine the average latency you want to achieve on your storage solution. Every storage vendor will promote its solution as low-latency storage, but what that means depends on where you measure the latency.

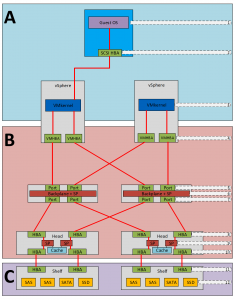

Below a overview where latency is introduced in a SAN or NAS solution.

As you can see, latency is added in 12 places. Of course, what you care about most is the latency inside the virtual machines. Because this is hard to measure when you have 5000+ virtual machines, the next best place to measure it is the VMkernel.

Again, there are several tools to determine the read and write latency in the VMkernel; ESXTOP, vCenter, and tools like Architect are good examples.

In my opinion, these are the maximum average latencies you should accept:

| Type | Read | Write |
|---|---|---|
| VMs for servers | 2 ms | 5 ms |
| VMs for desktops or RDS | < 1 ms | < 2 ms |

As always, lower is better.
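Once you have measured average latencies at the VMkernel level (for example with ESXTOP or vCenter), you can compare them against the table above. A minimal sketch follows; the type keys, `LatencyLimit` and `within_limits` are hypothetical names, and the inputs are averages you collect yourself, since the sketch does not read from any tool. The "<" entries for desktops are treated as strict limits.

```python
from typing import NamedTuple


class LatencyLimit(NamedTuple):
    read_ms: float
    write_ms: float
    strict: bool  # True where the table says "<", i.e. the limit itself is not allowed


# Values from the table above ("VMs for desktops or RDS" -> "desktop").
LIMITS = {
    "server": LatencyLimit(2.0, 5.0, strict=False),
    "desktop": LatencyLimit(1.0, 2.0, strict=True),
}


def within_limits(vm_type: str, avg_read_ms: float, avg_write_ms: float) -> bool:
    """True if measured average read and write latency meet the limits for vm_type."""
    lim = LIMITS[vm_type]
    if lim.strict:
        return avg_read_ms < lim.read_ms and avg_write_ms < lim.write_ms
    return avg_read_ms <= lim.read_ms and avg_write_ms <= lim.write_ms


print(within_limits("server", 1.8, 4.2))   # True
print(within_limits("desktop", 1.2, 1.5))  # False: read latency too high
```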

So, how do you determine which is the best storage solution for your needs?

Talk to your storage vendor. Give them the table you've created and ask which type and model suits your needs, and how to configure the storage solution. This way, you're sure that you're getting the right storage for your needs. And if you encounter storage problems in the future, you can check whether the storage solution is performing according to the requirements you agreed on with your storage vendor.